Models

Media and type
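Every model below is used the same way. A `PUT /services/<name>` call creates the service, with `init` pointing at a pretrained archive. A `POST /predict` call then runs inference, where `parameters.input`, `parameters.mllib` and `parameters.output` control input handling, backend options and the returned fields.

As a rough sketch of that flow without a client library, the snippet below builds the same JSON bodies with the Python standard library. The helper names `service_body`, `predict_body` and `call` are hypothetical. The sketch assumes a server on `localhost:8080`, as in the curl examples.

```python
import json
import urllib.request

DD_URL = "http://localhost:8080"  # assumed server address, as in the curl examples


def service_body(description, repository, init_url, mllib="caffe", connector="image"):
    """Body for PUT /services/<name> (hypothetical helper mirroring the curl examples)."""
    return {
        "description": description,
        "model": {"repository": repository, "init": init_url, "create_repository": True},
        "mllib": mllib,
        "type": "supervised",
        "parameters": {"input": {"connector": connector}},
    }


def predict_body(service, data, output=None, mllib=None, input_=None):
    """Body for POST /predict; options such as best, bbox or confidence_threshold go in output."""
    return {
        "service": service,
        "parameters": {"input": input_ or {}, "output": output or {}, "mllib": mllib or {}},
        "data": list(data),
    }


def call(method, path, body):
    """Send a JSON body to the server and decode the JSON reply (raises on HTTP errors)."""
    req = urllib.request.Request(
        DD_URL + path,
        data=json.dumps(body).encode("utf-8"),
        method=method,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

These two calls should correspond to the first pair of curl commands:

- `call("PUT", "/services/ilsvrc_googlenet", service_body(...))`
- `call("POST", "/predict", predict_body("ilsvrc_googlenet", ["/data/example.jpg"], output={"best": 3}))`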

curl -X PUT 'http://localhost:8080/services/ilsvrc_googlenet' -d '{

"description": "image classification service",

"model": {

"repository": "/opt/models/ilsvrc_googlenet",

"init": "https://deepdetect.com/models/init/desktop/images/classification/ilsvrc_googlenet.tar.gz",

"create_repository": true

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "ilsvrc_googlenet",

"parameters": {

"output": {

"best": 3

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"best":3}

data = ["/data/example.jpg"]

sname = 'ilsvrc_googlenet'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "ilsvrc_googlenet",

"parameters": {

"output": {

"best": 3

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "ilsvrc_googlenet",

"time": 201

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.0911879912018776,

"last": true,

"cat": "ambulance"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/detection_600 -d '{

"description": "object detection service",

"model": {

"repository": "/opt/models/detection_600",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/detection/detection_600.tar.gz"

},

"parameters": {"input": {"connector":"image"}},

"mllib": "caffe",

"type": "supervised"

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "detection_600",

"parameters": {

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3,"bbox":True}

data = ["/data/example.jpg"]

sname = 'detection_600'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)
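With `"bbox": true` in `parameters.output`, each entry of `body.predictions[i].classes` in a detection response carries a `cat`, a `prob` and a `bbox` dictionary.

The sketch below turns those entries into plain tuples. It assumes the response has already been decoded into a Python dict, as `dd.RETURN_PYTHON` does. `boxes_from_response` is a hypothetical helper name.

In the sample responses on this page, `ymin` is sometimes larger than `ymax`. For that reason the sketch orders each coordinate pair itself rather than trusting the key names.

```python
def boxes_from_response(response, min_prob=0.0):
    """Return (uri, cat, prob, (x0, y0, x1, y1)) tuples, highest probability first."""
    out = []
    for pred in response["body"]["predictions"]:
        for cls in pred.get("classes", []):
            bbox = cls.get("bbox")
            if bbox is None or cls["prob"] < min_prob:
                continue  # no box in this entry, or below the caller's own cut-off
            # Order each pair so that x0 <= x1 and y0 <= y1 whatever the key names say.
            x0, x1 = sorted((bbox["xmin"], bbox["xmax"]))
            y0, y1 = sorted((bbox["ymin"], bbox["ymax"]))
            out.append((pred["uri"], cls["cat"], cls["prob"], (x0, y0, x1, y1)))
    out.sort(key=lambda t: t[2], reverse=True)
    return out
```

For example, `for uri, cat, prob, box in boxes_from_response(classif, min_prob=0.5): print(cat, round(prob, 2), box)` lists only the more confident detections.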

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "detection_600",

"parameters": {

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "detection_600",

"time": 60

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.8248323798179626,

"bbox": {

"xmax": 487.2122497558594,

"ymax": 351.72113037109375,

"ymin": 521.0411376953125,

"xmin": 301.8126220703125

},

"cat": "Car"

},

{

"prob": 0.29296377301216125,

"bbox": {

"xmax": 202.32383728027344,

"ymax": 352.185791015625,

"ymin": 447.30419921875,

"xmin": 12.260007858276367

},

"cat": "Car"

},

{

"prob": 0.14670416712760925,

"bbox": {

"xmax": 534.9172973632812,

"ymax": 8.029011726379395,

"ymin": 397.7722473144531,

"xmin": 17.81478500366211

},

"cat": "Tree"

},

{

"prob": 0.13783478736877441,

"bbox": {

"xmax": 288.7256774902344,

"ymax": 335.0918273925781,

"ymin": 404.08905029296875,

"xmin": 156.46469116210938

},

"cat": "Van"

},

{

"prob": 0.13422219455242157,

"last": true,

"bbox": {

"xmax": 156.6908416748047,

"ymax": 150.88865661621094,

"ymin": 346.5965881347656,

"xmin": 21.79889488220215

},

"cat": "Tree"

}

],

"uri": "/data/example.jpg"

}

]

}

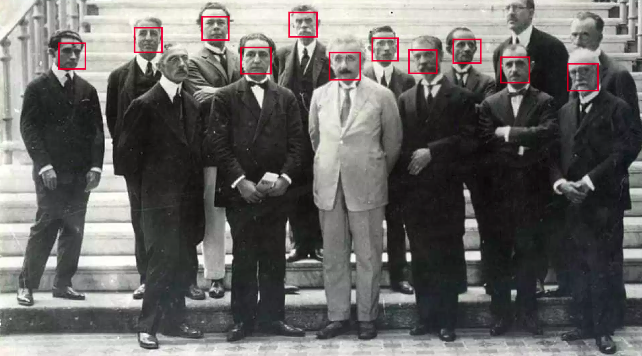

}curl -X PUT http://localhost:8080/services/faces -d '{

"description": "face detection service",

"model": {

"repository": "/opt/models/faces",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/detection/faces_512.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "faces",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold": 0.4, "bbox": True}

data = ["/data/example.jpg"]

sname = 'faces'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "faces",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"bbox": true

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "faces",

"time": 46

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.390458345413208,

"bbox": {

"xmax": 364.34820556640625,

"ymax": 50.02314376831055,

"ymin": 82.06399536132812,

"xmin": 326.3580017089844

},

"cat": "1"

},

{

"prob": 0.3900381922721863,

"bbox": {

"xmax": 271.8947448730469,

"ymax": 47.45260238647461,

"ymin": 74.53034973144531,

"xmin": 239.1929931640625

},

"cat": "1"

},

{

"prob": 0.325770765542984,

"bbox": {

"xmax": 531.6181030273438,

"ymax": 57.574459075927734,

"ymin": 82.18014526367188,

"xmin": 501.7938232421875

},

"cat": "1"

},

{

"prob": 0.23247282207012177,

"bbox": {

"xmax": 230.73373413085938,

"ymax": 16.717960357666016,

"ymin": 38.75651931762695,

"xmin": 201.1503448486328

},

"cat": "1"

},

{

"prob": 0.21733301877975464,

"bbox": {

"xmax": 398.0325927734375,

"ymax": 38.843482971191406,

"ymin": 60.36002731323242,

"xmin": 371.2444152832031

},

"cat": "1"

},

{

"prob": 0.20370665192604065,

"bbox": {

"xmax": 439.99615478515625,

"ymax": 48.639259338378906,

"ymin": 74.54566955566406,

"xmin": 407.150390625

},

"cat": "1"

},

{

"prob": 0.1948963850736618,

"bbox": {

"xmax": 160.39971923828125,

"ymax": 27.83022689819336,

"ymin": 50.85374450683594,

"xmin": 132.84400939941406

},

"cat": "1"

},

{

"prob": 0.18383292853832245,

"bbox": {

"xmax": 536.7980346679688,

"ymax": 1.5278087854385376,

"ymin": 26.120481491088867,

"xmin": 472.716796875

},

"cat": "1"

},

{

"prob": 0.1603844314813614,

"last": true,

"bbox": {

"xmax": 88.8065185546875,

"ymax": 45.23637771606445,

"ymin": 68.2235107421875,

"xmin": 61.595584869384766

},

"cat": "1"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/faces_gender -d '{

"description": "face and gender detection service",

"model": {

"repository": "/opt/models/faces_gender",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/detection/faces_gender.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "faces_gender",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold": 0.4, "bbox": True}

data = ["/data/example.jpg"]

sname = 'faces_gender'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "faces_gender",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"bbox": true

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "faces_gender",

"time": 379

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.9989920258522034,

"bbox": {

"xmax": 188.1786346435547,

"ymax": 95.33650970458984,

"ymin": 253.5126190185547,

"xmin": 25.546676635742188

},

"cat": "male"

},

{

"prob": 0.9103379845619202,

"bbox": {

"xmax": 398.0808410644531,

"ymax": 98.45462799072266,

"ymin": 257.0015869140625,

"xmin": 227.84500122070312

},

"cat": "male"

},

{

"prob": 0.8768270611763,

"bbox": {

"xmax": 604.056884765625,

"ymax": 96.18730926513672,

"ymin": 257.2901306152344,

"xmin": 434.84747314453125

},

"cat": "male"

},

{

"prob": 0.16960744559764862,

"last": true,

"bbox": {

"xmax": 599.8035888671875,

"ymax": 92.09048461914062,

"ymin": 260.4921569824219,

"xmin": 430.36224365234375

},

"cat": "female"

}

],

"uri": "/data/example.jpg"

}

]

}

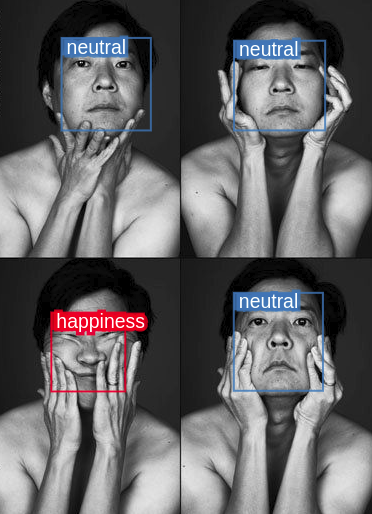

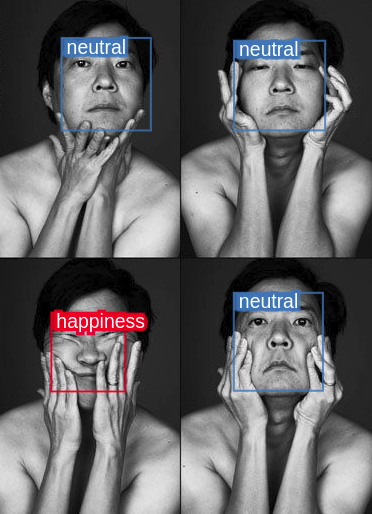

}curl -X PUT http://localhost:8080/services/faces_emo -d '{

"description": "face emotion detection service",

"model": {

"repository": "/opt/models/faces_emo",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/detection/faces_emo.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "faces_emo",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold": 0.4, "bbox": True}

data = ["/data/example.jpg"]

sname = 'faces_emo'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "faces_emo",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"bbox": true

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "faces_emo",

"time": 33

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.851776659488678,

"bbox": {

"xmax": 322.82733154296875,

"ymax": 40.19896697998047,

"ymin": 132.21400451660156,

"xmin": 236.5441436767578

},

"cat": "neutral"

},

{

"prob": 0.8290548920631409,

"bbox": {

"xmax": 146.94943237304688,

"ymax": 35.533573150634766,

"ymin": 132.1978302001953,

"xmin": 59.38333511352539

},

"cat": "neutral"

},

{

"prob": 0.7160714268684387,

"last": true,

"bbox": {

"xmax": 321.46368408203125,

"ymax": 294.2980651855469,

"ymin": 390.796875,

"xmin": 235.3292694091797

},

"cat": "neutral"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT 'http://localhost:8080/services/age_real' -d '{

"description": "age estimation service",

"model": {

"repository": "/opt/models/age_real",

"init": "https://deepdetect.com/models/init/desktop/images/classification/age_real.tar.gz",

"create_repository":true

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "age_real",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.05,

"best": 1

},

"mllib": {

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.05, "best":1}

data = ["/data/example.jpg"]

sname = 'age_real'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "age_real",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.05,

"best": 1

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "age_real",

"time": 993

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.0911879912018776,

"last": true,

"cat": "24"

}

],

"uri": "/data/example.jpg"

}

]

}

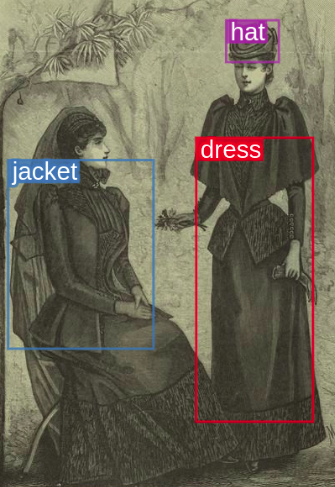

}curl -X PUT 'http://localhost:8080/services/basic_fashion' -d '{

"description": "clothes detection service",

"model": {

"repository": "/opt/platform/models/basic_fashion",

"init": "https://www.deepdetect.com/models/init/desktop/images/detection/basic_fashion_v2.tar.gz",

"create_repository":true

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "basic_fashion",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"bbox": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_output = {"confidence_threshold":0.4, "bbox":True}

data = ["/data/example.jpg"]

sname = 'basic_fashion'

classif = dd.post_predict(sname,data,{},{},parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "basic_fashion",

"parameters": {

"output": {

"confidence_threshold": 0.4,

"bbox": true

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "basic_fashion",

"time": 103

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.283363938331604,

"bbox": {

"xmax": 145.92205810546875,

"ymax": 173.904541015625,

"ymin": 348.5083923339844,

"xmin": 9.65214729309082

},

"cat": "bag"

},

{

"prob": 0.2829309105873108,

"bbox": {

"xmax": 285.46868896484375,

"ymax": 15.518260955810547,

"ymin": 63.17625427246094,

"xmin": 220.40829467773438

},

"cat": "hat"

},

{

"prob": 0.1963716745376587,

"bbox": {

"xmax": 270.4206848144531,

"ymax": 60.39577102661133,

"ymin": 78.02435302734375,

"xmin": 232.13482666015625

},

"cat": "glasses"

},

{

"prob": 0.1955023854970932,

"last": true,

"bbox": {

"xmax": 313.3597106933594,

"ymax": 144.37893676757812,

"ymin": 412.1800231933594,

"xmin": 197.72695922851562

},

"cat": "bag"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/classification_21k -d '{

"description": "generic image classification service",

"model": {

"repository": "/opt/models/classification_21k",

"init":"https://deepdetect.com/models/init/desktop/images/classification/classification_21k.tar.gz",

"create_repository": true

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "classification_21k",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"best": 3

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold": 0.4, "best": 3}

data = ["/data/example.jpg"]

sname = 'classification_21k'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "classification_21k",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"best": 3

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "classification_21k",

"time": 41

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.073883056640625,

"cat": "Atrium"

},

{

"prob": 0.06305904686450958,

"cat": "Library"

},

{

"prob": 0.06247774139046669,

"cat": "Roof"

},

{

"prob": 0.03711871802806854,

"cat": "Proton accelerator"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/classification_5k -d '{

"description": "generic image classification service",

"model": {

"repository": "/opt/models/classification_5k",

"init":"https://deepdetect.com/models/init/desktop/images/classification/classification_5k.tar.gz",

"create_repository": true

},

"mllib": "tensorflow",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "classification_5k",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"best": 3

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold": 0.4, "best": 3}

data = ["/data/example.jpg"]

sname = 'classification_5k'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "classification_5k",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"best": 3

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "classification_5k",

"time": 41

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.06305904686450958,

"cat": "Library"

},

{

"prob": 0.06247774139046669,

"cat": "Roof"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/faces_embedded -d '{

"description": "Faces embedded",

"model": {

"repository": "/opt/models/faces_embedded",

"create_repository": true,

"init":"https://deepdetect.com/models/init/embedded/images/detection/squeezenet_ssd_faces.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "faces_embedded",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3, "bbox":True}

data = ["/data/example.jpg"]

sname = 'faces_embedded'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "faces_embedded",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "faces_embedded",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 1,

"last": true,

"cat": "believed"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/faces_embedded -d '{

"description": "Faces embedded",

"model": {

"repository": "/opt/models/faces_embedded",

"create_repository": true,

"init":"https://deepdetect.com/models/init/embedded/images/detection/squeezenet_ssd_faces_ncnn.tar.gz"

},

"mllib": "ncnn",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "faces_embedded",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3, "bbox":True}

data = ["/data/example.jpg"]

sname = 'faces_embedded'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "faces_embedded",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "faces_embedded",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 1,

"last": true,

"cat": "believed"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/nsfw -d '{

"description": "nsfw classification service",

"model": {

"repository": "/opt/models/nsfw",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/classification/nsfw.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "nsfw",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.1

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.1}

data = ["/data/example.jpg"]

sname = 'nsfw'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)
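Classes whose probability falls below `confidence_threshold` are normally left out of `classes`. With a threshold of 0.1, the `nsfw` entry may therefore be missing entirely.

The sketch below reads the `nsfw` probability defensively and applies a cut-off on the application side. The helper names and the 0.5 default are illustrative assumptions, not values taken from DeepDetect.

```python
def nsfw_probability(response, uri=None):
    """Probability of the 'nsfw' class for one image; 0.0 if that class was filtered out."""
    for pred in response["body"]["predictions"]:
        if uri is not None and pred.get("uri") != uri:
            continue  # not the image we are looking for
        for cls in pred.get("classes", []):
            if cls["cat"] == "nsfw":
                return cls["prob"]
        return 0.0  # image found, but its nsfw class fell under confidence_threshold
    raise KeyError(f"no prediction for uri {uri!r}")


def is_nsfw(response, uri=None, cutoff=0.5):
    """Application-side decision; the 0.5 cut-off is an arbitrary example value."""
    return nsfw_probability(response, uri) >= cutoff
```

Keeping the decision threshold outside the request lets you tune it without changing `confidence_threshold`, which only controls which classes are returned.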

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "nsfw",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.1,

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "nsfw",

"time": 45

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.8330243825912476,

"cat": "ok"

},

{

"prob": 0.16697560250759125,

"last": true,

"cat": "nsfw"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/places -d '{

"description": "places classification service",

"model": {

"repository": "/opt/models/places",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/classification/places.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "places",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.5

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.5}

data = ["/data/example.jpg"]

sname = 'places'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)
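`data` is a list, so a single `/predict` call can classify several images, and each element of `body.predictions` carries the `uri` it belongs to.

The sketch below indexes the result by `uri`, which avoids relying on the order of `predictions`. `classes_by_uri` is a hypothetical helper. It assumes the decoded dict shape shown in the responses on this page.

```python
def classes_by_uri(response):
    """Map each input uri to its [(cat, prob), ...] list, most probable first."""
    result = {}
    for pred in response["body"]["predictions"]:
        classes = [(c["cat"], c["prob"]) for c in pred.get("classes", [])]
        classes.sort(key=lambda cp: cp[1], reverse=True)
        result[pred["uri"]] = classes
    return result
```

For example, `classes_by_uri(classif)["/data/example.jpg"][0]` would give the most probable place for that image.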

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "places",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.5

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "places",

"time": 56

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.559813380241394,

"cat": "downtown"

},

{

"prob": 0.21302251517772675,

"cat": "skyscraper"

},

{

"prob": 0.04305418208241463,

"cat": "office_building"

},

{

"prob": 0.030694598332047462,

"last": true,

"cat": "plaza"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/segmentation_150 -d '{

"description": "object segmentation service",

"model": {

"repository": "/opt/models/segmentation_150",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/segmentation/segmentation_150.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "segmentation_150",

"parameters": {

"input": {

"segmentation": true

},

"output": {},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {"segmentation":True}

parameters_mllib = {}

parameters_output = {}

data = ["/data/example.jpg"]

sname = 'segmentation_150'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "segmentation_150",

"parameters": {

"input": {

"segmentation": true

},

"output": {},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "segmentation_150",

"time": 1297

},

"body": {

"predictions": [

{

"last": true,

"imgsize": {

"width": 639,

"height": 421

},

"vals": [

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

"...",

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/sent_en -d '{

"description": "English sentiment",

"model": {

"repository": "/opt/models/sent_en",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/text/sent_en_vdcnn.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "txt"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "sent_en",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3

},

"mllib": {

"gpu": true

}

},

"data": [

"good stuff!"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3}

data = ["good stuff!"]

sname = 'sent_en'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "sent_en",

"parameters": {

"input": {

},

"output": {

"confidence_threshold": 0.3

},

"mllib": {

"gpu": true

}

},

"data": [

"good stuff!"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "sent_en",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.897,

"last": true,

"cat": "positive"

}

],

"uri": "0"

}

]

}

}

curl -X PUT http://localhost:8080/services/shufflenet -d '{

"description": "Shufflenet",

"model": {

"repository": "/opt/models/shufflenet",

"create_repository": true,

"init":"https://deepdetect.com/models/init/embedded/images/classification/shufflenet.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "shufflenet",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"best": 3

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3, "best": 3}

data = ["/data/example.jpg"]

sname = 'shufflenet'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "shufflenet",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"best": 3

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run()

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "shufflenet",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.073883056640625,

"cat": "Atrium"

},

{

"prob": 0.06305904686450958,

"cat": "Library"

},

{

"prob": 0.06247774139046669,

"cat": "Roof"

},

{

"prob": 0.03711871802806854,

"cat": "Proton accelerator"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/squeezenet -d '{

"description": "Squeezenet",

"model": {

"repository": "/opt/models/squeezenet",

"create_repository": true,

"init":"https://deepdetect.com/models/init/embedded/images/classification/squeezenet_v1.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "squeezenet",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"best": 3

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3, "best": 3}

data = ["/data/example.jpg"]

sname = 'squeezenet'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "squeezenet",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"best": 3

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "squeezenet",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.073883056640625,

"cat": "Atrium"

},

{

"prob": 0.06305904686450958,

"cat": "Library"

},

{

"prob": 0.06247774139046669,

"cat": "Roof"

},

{

"prob": 0.03711871802806854,

"cat": "Proton accelerator"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/squeezenet_ssd_voc -d '{

"description": "Squeezenet SSD",

"model": {

"repository": "/opt/models/squeezenet_ssd_voc",

"create_repository": true,

"init":"https://deepdetect.com/models/init/embedded/images/detection/squeezenet_ssd_voc.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "squeezenet_ssd_voc",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3, "bbox":True}

data = ["/data/example.jpg"]

sname = 'squeezenet_ssd_voc'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "squeezenet_ssd_voc",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "squeezenet_ssd_voc",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 1,

"last": true,

"cat": "believed"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/squeezenet_ssd_voc -d '{

"description": "Squeezenet SSD",

"model": {

"repository": "/opt/models/squeezenet_ssd_voc",

"create_repository": true,

"init":"https://deepdetect.com/models/init/embedded/images/detection/squeezenet_ssd_voc_ncnn.tar.gz"

},

"mllib": "ncnn",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "squeezenet_ssd_voc",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3, "bbox":True}

data = ["/data/example.jpg"]

sname = 'squeezenet_ssd_voc'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "squeezenet_ssd_voc",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "squeezenet_ssd_voc",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 1,

"last": true,

"cat": "believed"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/vgg16 -d '{

"description": "generic image classification service",

"model": {

"repository": "/opt/models/vgg16",

"init":"https://deepdetect.com/models/init/desktop/images/classification/vgg16.tar.gz",

"create_repository": true

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "vgg16",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"best": 3

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold": 0.4, "best": 3}

data = ["/data/example.jpg"]

sname = 'vgg16'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "vgg16",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.4,

"best": 3

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "vgg16",

"time": 41

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.073883056640625,

"cat": "Atrium"

},

{

"prob": 0.06305904686450958,

"cat": "Library"

},

{

"prob": 0.06247774139046669,

"cat": "Roof"

},

{

"prob": 0.03711871802806854,

"cat": "Proton accelerator"

}

],

"uri": "/data/example.jpg"

}

]

}

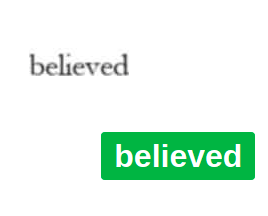

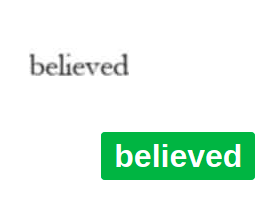

} multiword_ocr

multiword_ocr

Multiple words OCR, use `word_detect` model to first text in images and pass crops to this model

Desktop, Ocr, Caffe
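The two-stage pipeline described above can be sketched in Python. This is a minimal, stdlib-only sketch under stated assumptions: it takes a parsed `/predict` response from the `word_detect` service (in the layout shown in the examples on this page, with `bbox` entries holding `xmin`, `xmax`, `ymin`, `ymax` in pixels) and turns each detection into a crop rectangle for the OCR service. The helper name `word_crops` and its `pad` parameter are hypothetical. Because the sample detection responses on this page list `ymin` larger than `ymax`, the sketch sorts each coordinate pair instead of trusting the key names.

```python
def word_crops(response, width, height, pad=2):
    """Return (left, top, right, bottom) integer crop boxes.

    Hypothetical helper: `response` is a parsed /predict response from the
    word_detect service; `width` and `height` are the source image size in
    pixels. Boxes are sorted top-to-bottom, then left-to-right.
    """
    boxes = []
    for prediction in response["body"]["predictions"]:
        for cls in prediction.get("classes", []):
            bbox = cls.get("bbox")
            if bbox is None:
                continue
            # Sort each coordinate pair: the sample responses list ymin > ymax.
            x0, x1 = sorted((bbox["xmin"], bbox["xmax"]))
            y0, y1 = sorted((bbox["ymin"], bbox["ymax"]))
            # Pad slightly around the word and clip to the image bounds.
            left = max(0, int(x0) - pad)
            top = max(0, int(y0) - pad)
            right = min(width, int(x1) + pad)
            bottom = min(height, int(y1) + pad)
            if right > left and bottom > top:
                boxes.append((left, top, right, bottom))
    # Rough reading order: by top edge, then by left edge.
    return sorted(boxes, key=lambda b: (b[1], b[0]))
```

Each tuple matches the `(left, upper, right, lower)` box that Pillow's `Image.crop` expects, so the crops could be saved and sent to the `word_ocr` service one by one. Sorting by top edge is only an approximation of reading order and will misorder words on slanted or multi-column text.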

curl -X PUT http://localhost:8080/services/word_ocr -d '{

"description": "Word ocr",

"model": {

"repository": "/opt/models/multiword_ocr",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/ocr/multiword_ocr.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "word_ocr",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0,

"ctc": true,

"blank_label": 0

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0, "ctc":True, "blank_label": 0}

data = ["/data/example.jpg"]

sname = 'word_ocr'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "word_ocr",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0,

"ctc": true,

"blank_label": 0

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "word_ocr",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 1,

"last": true,

"cat": "believed"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/words_mnist -d '{

"description": "OCR service",

"model": {

"repository": "/opt/models/words_mnist",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/ocr/words_mnist.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "words_mnist",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0,

"ctc": true,

"blank_label": 0

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0, "ctc":True, "blank_label": 0}

data = ["/data/example.jpg"]

sname = 'words_mnist'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "words_mnist",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0,

"ctc": true,

"blank_label": 0

},

"mllib": {}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "words_mnist",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 1,

"last": true,

"cat": "believed"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/amazon_en -d '{

"description": "Product sentiment",

"model": {

"repository": "/opt/models/amazon_en",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/text/amazon_polarity_vdcnn.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "txt"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "amazon_en",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3

},

"mllib": {

"gpu": true

}

},

"data": [

"good stuff!"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3}

data = ["good stuff!"]

sname = 'amazon_en'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "amazon_en",

"parameters": {

"input": {

},

"output": {

"confidence_threshold": 0.3

},

"mllib": {

"gpu": true

}

},

"data": [

"good stuff!"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "amazon_en",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.897,

"last": true,

"cat": "positive"

}

],

"uri": "0"

}

]

}

}

curl -X PUT http://localhost:8080/services/word_detect -d '{

"description": "Word detection",

"model": {

"repository": "/opt/models/word_detect",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/detection/word_detect_v2.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "word_detect",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.3,"bbox":True}

data = ["/data/example.jpg"]

sname = 'word_detect'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "word_detect",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.3,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "word_detect",

"time": 17

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 1,

"last": true,

"cat": "believed"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/generic_detect_v2 -d '{

"description": "generic object detection service",

"model": {

"repository": "/opt/models/generic_detect_v2",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/detection/generic_detect_v2.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "generic_detect_v2",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.5,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.5,"bbox":True}

data = ["/data/example.jpg"]

sname = 'generic_detect_v2'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)
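If the `dd_client` module is not available, the same request can be sent with Python's standard library alone. This is a minimal sketch, assuming a DeepDetect server on `localhost:8080` as in the examples above; `build_predict_body` and `predict` are hypothetical helper names, not part of any DeepDetect client. Building the body explicitly also makes the parameter placement visible: `confidence_threshold` and `bbox` belong in the `output` section, and `gpu` in `mllib`.

```python
import json
import urllib.request


def build_predict_body(service, data, output=None, mllib=None, input_=None):
    """Assemble a /predict body with the same layout as the curl examples."""
    return {
        "service": service,
        "parameters": {
            "input": input_ or {},
            "output": output or {},
            "mllib": mllib or {},
        },
        "data": list(data),
    }


def predict(body, host="localhost", port=8080, timeout=30):
    """POST `body` to a running DeepDetect server and return the parsed JSON."""
    request = urllib.request.Request(
        f"http://{host}:{port}/predict",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

For this service the call would be `predict(build_predict_body("generic_detect_v2", ["/data/example.jpg"], output={"confidence_threshold": 0.5, "bbox": True}, mllib={"gpu": True}))`. Note that `urlopen` raises `urllib.error.HTTPError` for non-2xx replies, so server-side errors surface as exceptions rather than as a returned dict.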

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "generic_detect_v2",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.5,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "generic_detect_v2",

"time": 50

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.7916921973228455,

"bbox": {

"xmax": 494.16363525390625,

"ymax": 24.178077697753906,

"ymin": 411.2965087890625,

"xmin": 97.42479705810547

},

"cat": "1"

},

{

"prob": 0.6092143058776855,

"bbox": {

"xmax": 411.5879211425781,

"ymax": 83.2486801147461,

"ymin": 146.58810424804688,

"xmin": 306.9629211425781

},

"cat": "1"

},

{

"prob": 0.5768523812294006,

"bbox": {

"xmax": 295.932861328125,

"ymax": 227.992919921875,

"ymin": 380.48736572265625,

"xmin": 162.4053192138672

},

"cat": "1"

},

{

"prob": 0.57443767786026,

"last": true,

"bbox": {

"xmax": 621.4046020507812,

"ymax": 170.29580688476562,

"ymin": 416.793212890625,

"xmin": 477.0945129394531

},

"cat": "1"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/generic_detect_v2 -d '{

"description": "generic object detection service",

"model": {

"repository": "/opt/models/generic_detect_v2",

"create_repository": true,

"init":"https://deepdetect.com/models/init/embedded/images/detection/squeezenet_ssd_generic_detect_v2.tar.gz"

},

"mllib": "caffe",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "generic_detect_v2",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.5,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.5,"bbox":True}

data = ["/data/example.jpg"]

sname = 'generic_detect_v2'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "generic_detect_v2",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.5,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "generic_detect_v2",

"time": 50

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.7916921973228455,

"bbox": {

"xmax": 494.16363525390625,

"ymax": 24.178077697753906,

"ymin": 411.2965087890625,

"xmin": 97.42479705810547

},

"cat": "1"

},

{

"prob": 0.6092143058776855,

"bbox": {

"xmax": 411.5879211425781,

"ymax": 83.2486801147461,

"ymin": 146.58810424804688,

"xmin": 306.9629211425781

},

"cat": "1"

},

{

"prob": 0.5768523812294006,

"bbox": {

"xmax": 295.932861328125,

"ymax": 227.992919921875,

"ymin": 380.48736572265625,

"xmin": 162.4053192138672

},

"cat": "1"

},

{

"prob": 0.57443767786026,

"last": true,

"bbox": {

"xmax": 621.4046020507812,

"ymax": 170.29580688476562,

"ymin": 416.793212890625,

"xmin": 477.0945129394531

},

"cat": "1"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/generic_detect_v2 -d '{

"description": "generic object detection service",

"model": {

"repository": "/opt/models/generic_detect_v2",

"create_repository": true,

"init":"https://deepdetect.com/models/init/embedded/images/detection/squeezenet_generic_detect_v2.tar.gz"

},

"mllib": "ncnn",

"type": "supervised",

"parameters": {

"input": {

"connector": "image"

}

}

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "generic_detect_v2",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.5,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.5,"bbox":True}

data = ["/data/example.jpg"]

sname = 'generic_detect_v2'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "generic_detect_v2",

"parameters": {

"input": {},

"output": {

"confidence_threshold": 0.5,

"bbox": true

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "generic_detect_v2",

"time": 50

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.7916921973228455,

"bbox": {

"xmax": 494.16363525390625,

"ymax": 24.178077697753906,

"ymin": 411.2965087890625,

"xmin": 97.42479705810547

},

"cat": "1"

},

{

"prob": 0.6092143058776855,

"bbox": {

"xmax": 411.5879211425781,

"ymax": 83.2486801147461,

"ymin": 146.58810424804688,

"xmin": 306.9629211425781

},

"cat": "1"

},

{

"prob": 0.5768523812294006,

"bbox": {

"xmax": 295.932861328125,

"ymax": 227.992919921875,

"ymin": 380.48736572265625,

"xmin": 162.4053192138672

},

"cat": "1"

},

{

"prob": 0.57443767786026,

"last": true,

"bbox": {

"xmax": 621.4046020507812,

"ymax": 170.29580688476562,

"ymin": 416.793212890625,

"xmin": 477.0945129394531

},

"cat": "1"

}

],

"uri": "/data/example.jpg"

}

]

}

}

curl -X PUT http://localhost:8080/services/detection_201 -d '{

"description": "object detection service",

"model": {

"repository": "/opt/models/detection_201_simsearch",

"create_repository": true,

"init":"https://deepdetect.com/models/init/desktop/images/detection/detection_201_simsearch.tar.gz"

},

"parameters": {"input": {"connector":"image"}},

"mllib": "caffe",

"type": "supervised"

}'

curl -X POST 'http://localhost:8080/predict' -d '{

"service": "detection_201",

"parameters": {

"output": {

"confidence_threshold": 0.1,

"rois": "rois"

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}'

from dd_client import DD

host = 'localhost'

port = 8080

dd = DD(host,port)

dd.set_return_format(dd.RETURN_PYTHON)

parameters_input = {}

parameters_mllib = {}

parameters_output = {"confidence_threshold":0.1,"rois":"rois"}

data = ["/data/example.jpg"]

sname = 'detection_201'

classif = dd.post_predict(sname,data,parameters_input,parameters_mllib,parameters_output)
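This model returns several categories per image (the sample response for this service mixes `Car`, `Tree` and `Van`), so it can help to group detections client-side. The following is a minimal sketch operating on a parsed `/predict` response in the layout shown on this page; `detections_by_category` and its `min_prob` argument are hypothetical, and `min_prob` is only an extra client-side filter on top of the server-side `confidence_threshold`.

```python
from collections import defaultdict


def detections_by_category(response, min_prob=0.0):
    """Group (prob, bbox) pairs by category label, highest probability first."""
    groups = defaultdict(list)
    for prediction in response["body"]["predictions"]:
        for cls in prediction.get("classes", []):
            # Skip detections below the client-side threshold.
            if cls["prob"] >= min_prob:
                groups[cls["cat"]].append((cls["prob"], cls.get("bbox")))
    # Sort each category's detections by descending probability.
    for items in groups.values():
        items.sort(key=lambda item: item[0], reverse=True)
    return dict(groups)
```

Raising `min_prob` above the `0.1` threshold used in the request is a cheap way to experiment with stricter cut-offs without re-running the model.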

// https://www.npmjs.com/package/deepdetect-js

var DD = require('deepdetect-js');

const dd = new DD({

host: 'localhost',

port: 8080

})

const postData = {

"service": "detection_201",

"parameters": {

"output": {

"confidence_threshold": 0.1,

"rois": "rois"

},

"mllib": {

"gpu": true

}

},

"data": [

"/data/example.jpg"

]

}

async function run() {

const predict = await dd.postPredict(postData);

console.log(predict);

}

run();

{

"status": {

"code": 200,

"msg": "OK"

},

"head": {

"method": "/predict",

"service": "detection_201",

"time": 60

},

"body": {

"predictions": [

{

"classes": [

{

"prob": 0.8248323798179626,

"bbox": {

"xmax": 487.2122497558594,

"ymax": 351.72113037109375,

"ymin": 521.0411376953125,

"xmin": 301.8126220703125

},

"cat": "Car"

},

{

"prob": 0.29296377301216125,

"bbox": {

"xmax": 202.32383728027344,

"ymax": 352.185791015625,

"ymin": 447.30419921875,

"xmin": 12.260007858276367

},

"cat": "Car"

},

{

"prob": 0.14670416712760925,

"bbox": {

"xmax": 534.9172973632812,

"ymax": 8.029011726379395,

"ymin": 397.7722473144531,

"xmin": 17.81478500366211

},

"cat": "Tree"

},

{

"prob": 0.13783478736877441,

"bbox": {

"xmax": 288.7256774902344,

"ymax": 335.0918273925781,

"ymin": 404.08905029296875,

"xmin": 156.46469116210938

},

"cat": "Van"

},

{

"prob": 0.13422219455242157,

"last": true,

"bbox": {

"xmax": 156.6908416748047,

"ymax": 150.88865661621094,

"ymin": 346.5965881347656,

"xmin": 21.79889488220215

},

"cat": "Tree"

}

],

"uri": "/data/example.jpg"

}

]

}

}
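Every response on this page carries a `status` block (`"code": 200, "msg": "OK"` in the samples) next to `head` and `body`. A small check before reading `body` keeps client code from failing later with a confusing `KeyError`. This is a minimal sketch, not part of any DeepDetect client: `DeepDetectError` and `check_status` are hypothetical names, and accepting `201` as well as `200` rests on the assumption that service creation (`PUT /services/...`) reports `201` rather than `200`.

```python
class DeepDetectError(RuntimeError):
    """Hypothetical exception type for failed DeepDetect calls."""


def check_status(response, ok_codes=(200, 201)):
    """Return the response body, or raise if the status block reports failure.

    Assumes the layout shown on this page:
    {"status": {"code": ..., "msg": ...}, "head": {...}, "body": {...}}.
    """
    status = response.get("status", {})
    code = status.get("code")
    if code not in ok_codes:
        raise DeepDetectError(f"DeepDetect returned {code}: {status.get('msg', 'no message')}")
    # Some responses (e.g. service creation) may have no body at all.
    return response.get("body", {})
```

With `dd.set_return_format(dd.RETURN_PYTHON)` the `classif` value in the Python examples is already a dict, so it can be passed to `check_status` directly.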