Blog

Model Training and Generalization

5 March 2021

Machine learning aims to replace hand-coded functions with models trained automatically from data. Such automation is a reasonable and certainly inevitable trend in computer science.

The main ‘trick’, however, remains training models that generalize well to unseen data. Training a model involves a potentially large set of data called the training set. It is fairly easy for a machine, and especially for deep neural networks, to fully memorize these samples.
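The gap between memorizing and generalizing can be illustrated with a minimal sketch. It assumes a toy setup that is not from the post: a 1-nearest-neighbor "model" that simply stores its training samples, and labels drawn as coin flips so that nothing can be learned from the inputs.

```python
import random

def nearest_neighbor_predict(train, x):
    """Return the label of the stored sample closest to x (pure memorization)."""
    return min(train, key=lambda sample: abs(sample[0] - x))[1]

def accuracy(train, samples):
    """Fraction of samples whose label is predicted correctly."""
    hits = sum(nearest_neighbor_predict(train, x) == y for x, y in samples)
    return hits / len(samples)

rng = random.Random(0)
# Toy assumption: labels are coin flips, independent of the inputs.
train_set = [(rng.random(), rng.randint(0, 1)) for _ in range(200)]
test_set = [(rng.random(), rng.randint(0, 1)) for _ in range(200)]

train_acc = accuracy(train_set, train_set)  # each sample finds itself
test_acc = accuracy(train_set, test_set)    # unseen samples: chance level
print(f"train accuracy: {train_acc:.2f}, test accuracy: {test_acc:.2f}")
```

The training accuracy is perfect by construction, while the accuracy on unseen samples stays around chance, which is what memorization without generalization looks like.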

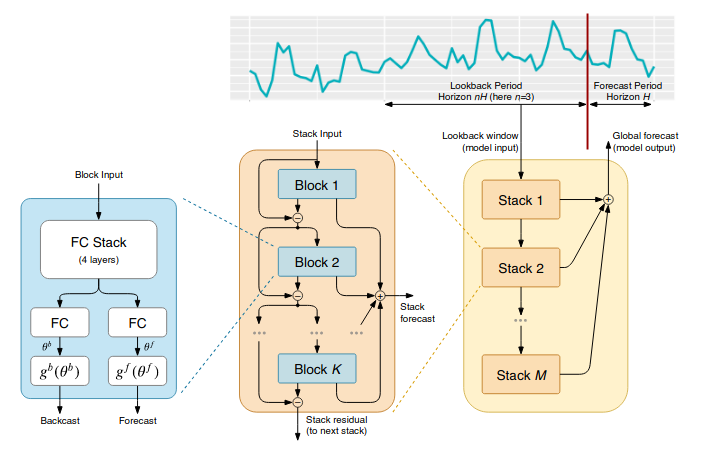

Time Series forecasting with NBEATS

26 February 2021

This is the second article on time series with Deep Learning and DeepDetect. It shows how to use NBEATS, a deep neural network architecture dedicated to time series. In our earlier post on time series with recurrent networks and DeepDetect, we used LSTMs; here we focus on more appropriate architectures.

This blog post shows how to obtain much more configurable and accurate time-series forecasting models than with other methods.
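As a rough illustration of the data such forecasting models consume, the sketch below cuts a series into pairs of a past window and a future window. The function name `make_windows`, its parameters and the sample values are assumptions made for this example; this is not DeepDetect's API.

```python
def make_windows(series, backcast_len, forecast_len):
    """Split a series into (past, future) training pairs with a sliding window."""
    pairs = []
    last_start = len(series) - backcast_len - forecast_len
    for start in range(last_start + 1):
        split = start + backcast_len
        pairs.append((series[start:split], series[split:split + forecast_len]))
    return pairs

# Hypothetical measurements, for illustration only.
series = [10, 11, 13, 12, 15, 16, 18]
pairs = make_windows(series, backcast_len=4, forecast_len=2)
for past, future in pairs:
    print(past, "->", future)
```

Each pair gives the model a fixed-length history to read and a fixed-length horizon to predict. A series shorter than the two windows combined yields no pairs.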

Setting up an OCR REST API with DeepDetect

19 February 2021

This article shows how to set up a REST API for an OCR system in five minutes.

Goal: set up an API endpoint to which images are sent and which returns text positions and characters.

Technology:

- A deep neural object detector that locates text in images
- A deep neural OCR model that reads detected text into a character string

For this, DeepDetect provides:

- A REST API for Deep Learning applications
- Pre-trained models that are free to use
- A simple way to chain models so that a single API call does all the work

DeepDetect setup

Let’s start a ready-to-use Docker image of DeepDetect server with CPU and/or GPU support.

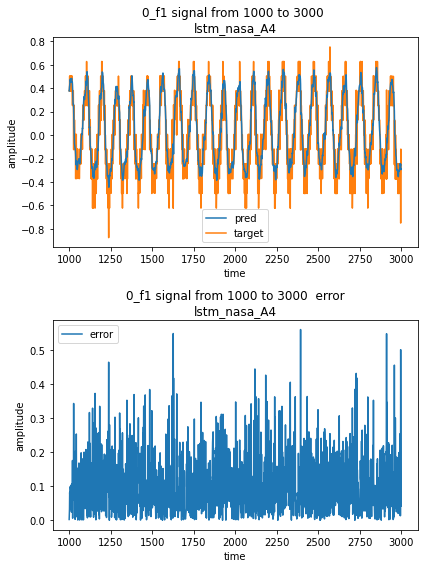

Time Series forecasting with Deep Learning using DeepDetect

12 February 2021

This article is the first of an ongoing series on forecasting time series with Deep Learning and DeepDetect.

DeepDetect allows for quick and very powerful modeling of time series for a variety of applications, including forecasting and anomaly detection.

This series of posts describes reproducible results with powerful deep network advances such as LSTMs, NBEATS and Transformer architectures.

The DeepDetect Open Source Server & DeepDetect Platform come with the following features for time series:

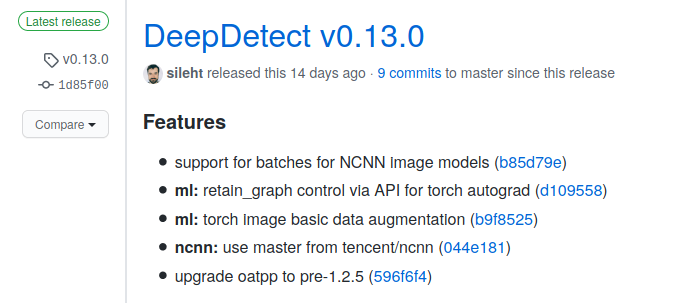

DeepDetect v0.13.0

5 February 2021

DeepDetect v0.13 was released last week, with improved multi-model chaining on ARM and CPU hardware.

This is considered useful for embedded AI applications and CPU cloud instances alike. https://t.co/i1J96Q1YR1 #deeplearning #AI #ARM

Posted by jolibrain (@jolibrain) on January 29, 2021

DeepDetect release v0.13.0

DeepDetect v0.13.0 was released a couple of weeks ago. Below we review the main features, fixes and additions.

In summary:

- NCNN backend for efficient and lightweight inference on ARM and CPUs:
  - Support for batches of multiple images in inference
  - Ability to use NCNN models with multi-model chains
  - Updated to the latest NCNN code
- Basic image data augmentation for vision models trained with the Torch backend

Improvements to the NCNN backend

NCNN is a great library for neural network inference on ARM and embedded GPU devices.

Quick Deep Learning from CSV data

29 January 2021

Automation via Deep Learning is applicable to all data types. This is because neural networks can be trained straight from raw data. Relevant data types range from images to videos, logs, texts, point clouds and graphs.

However, in practice, most data collected for industrial purposes are in the form of spreadsheets or CSV data files. This is because CSV is a simple, convenient and human-readable format.
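To give an idea of what going from a CSV file to network inputs involves, here is a minimal sketch using only the Python standard library. The column names, values and the min-max scaling step are assumptions chosen for illustration; this does not reproduce DeepDetect's own CSV handling.

```python
import csv
import io

# Hypothetical CSV content, standing in for a spreadsheet export.
raw = """temperature,pressure,label
20.5,1.01,ok
35.0,0.98,alert
27.5,1.00,ok
"""

rows = list(csv.DictReader(io.StringIO(raw)))
feature_names = ["temperature", "pressure"]

def min_max_scale(values):
    """Rescale values to [0, 1]; constant columns map to 0.0."""
    low, high = min(values), max(values)
    span = high - low
    return [(v - low) / span if span else 0.0 for v in values]

# Scale each numeric column independently, then rebuild one vector per row.
columns = {
    name: min_max_scale([float(row[name]) for row in rows])
    for name in feature_names
}
features = [[columns[name][i] for name in feature_names] for i in range(len(rows))]
labels = [row["label"] for row in rows]

for vector, label in zip(features, labels):
    print(vector, label)
```

Scaling the columns to a common range is a common preprocessing choice for neural networks, since raw spreadsheet columns often differ by orders of magnitude.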

Why Deep Learning with C++?

22 January 2021

At Jolibrain, we’ve been building deep learning systems for over five years now. Some of us had worked in academia on AI Planning for robotics, sometimes for over a decade. There, C++ is a natural fit, because the need for automation meets embedded performance.

So in 2015 C++ felt natural to us for Deep Learning, and thus DeepDetect is written in C++.

This short post shares our experience, good and bad, with C++ for Deep Learning as of early 2021.

DeepDetect v0.12.0

14 January 2021

DeepDetect release v0.12.0

DeepDetect v0.12.0 was released recently. Here we briefly review the main novel features and important release elements.

In summary:

- Vision Transformers support with two new ViT light architectures
- Torchvision image classification models
- NCNN improved inference for image models
- State-of-the-art time-series forecasting with N-BEATS
- New local high-throughput REST API server with OATPP

DeepDetect release v0.12 with support for Vision Transformers (ViT) for image classification, improved N-BEATS for time-series and new OATPP webserver #DeepLearning #PyTorch https://t.

DeepDetect Docker Images, Releases & CI/CD

8 January 2021

Build type: STABLE, DEVEL. Targets: SOURCE, Docker image CPU, Docker image GPU, Docker image GPU+TORCH, Docker image GPU+TENSORRT.

DeepDetect Docker for GPU & CPU

DeepDetect Docker images are available for CPU and GPU with a range of supported backends, from PyTorch to Caffe, TensorRT, NCNN, TensorFlow, …

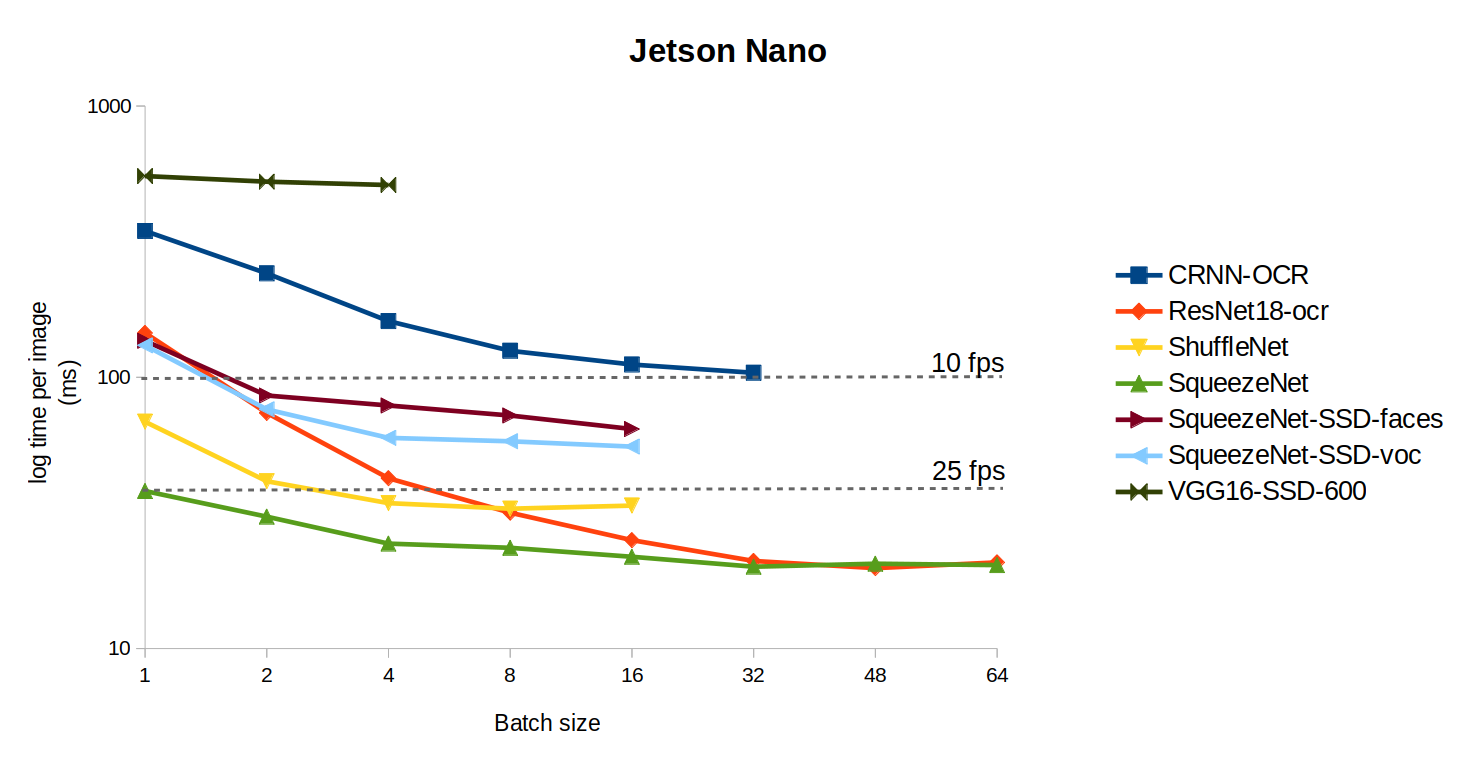

Benchmarking Deep Neural Models

30 December 2020

Benchmarking code

Deep neural models can be considered as code snippets written by machines from data. Benchmarking traditional code considers metrics such as running time and memory usage. Deep models differ from traditional code when it comes to benchmarking for at least two reasons:

Constant running time: Deep neural networks run in constant FLOPs, i.e. most networks consume a fixed number of operations, whereas some hand-coded algorithms are iterative and may run an unknown but bounded number of operations before reaching a result.
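The contrast in running time can be sketched in a few lines. The layer sizes and the choice of Euclid's algorithm as the iterative example are assumptions made for illustration, and the FLOP count is an approximation that ignores biases and activations.

```python
def dense_flops(layer_sizes):
    """Approximate FLOPs of one forward pass through fully connected layers.

    Counts one multiply and one add per weight; biases and activations
    are ignored. The result depends only on the architecture.
    """
    return sum(
        2 * n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )

def gcd_steps(a, b):
    """Number of loop iterations Euclid's algorithm needs for (a, b)."""
    steps = 0
    while b:
        a, b = b, a % b
        steps += 1
    return steps

# Hypothetical network: 784 inputs, 128 hidden units, 10 outputs.
network_cost = dense_flops([784, 128, 10])
print("network FLOPs for any input:", network_cost)

# The iterative algorithm's cost varies with the values it is given.
for pair in [(100, 10), (48, 18), (89, 55)]:
    print(pair, "->", gcd_steps(*pair), "iterations")
```

The network's cost is known before seeing any input, whereas the iteration count of the hand-coded algorithm changes with its arguments while remaining bounded.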