Training from CSV data

The DD platform can train from CSV (and SVM) format.

Data format

Any CSV file with:

Text fields

Numeric fields

Categorical fields (i.e. a finite set of values, string or numbers, e.g. cities, postal codes, etc…)

You can specify both a training and a testing CSV or letting the DD platform shuffle and splitting the test set with the tsplit parameter.

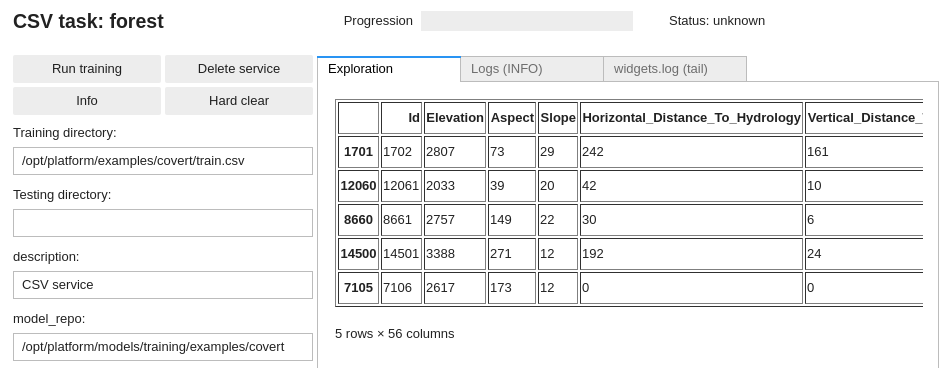

The DD platform comes with a custom Jupyter UI that allows you to review your data:

See https://deepdetect.com/tutorials/csv-training/ for some more details.

Training from CSV

DD automatically parses and manages the CSV file and data, including the handling of categorical variables and the normalization of input data.

Using the DD platform, from a JupyterLab notebook, start from the code on the right.

csv_train_job = CSV(

'forest',

host='deepdetect_training',

port=8080,

training_repo="/opt/platform/examples/covert/train.csv",

model_repo="/opt/platform/models/training/JeanDupont/covert",

csv_label='Cover_Type',

csv_id='Id',

csv_separator=',',

tsplit=0.2,

template='mlp',

layers='[150,150,150]',

activation="prelu",

nclasses=7,

scale= True,

iterations=10000,

base_lr=0.001,

solver_type="AMSGRAD"

)

csv_train_job

This prepares a training job for a 3 layers neural network (MLP) with 150 neurons in every layer.

forestis the example job name

training_repospecifies the location of the data

csv_idspecifies, when it exists, which of the CSV columns is the identifier of the samplescsv_labelspecifies which column holds the label of the samplestsplitspecifies the part of the training set used for testing (0.2 for 20%)templatesspecifies an MLP, i.e a simple neural networklayersspecifies 3 layers of 150 hidden neurons eachpreluspecifies PReLU activationsnclassesspecifies the number of classes of the problemsolver_typespecifies the optimizer, see https://deepdetect.com/api/#launch-a-training-job andsolver_typefor the many optionsbase_lrspecifies the learning rate. For finetuning object detection models, 1e-4 works well.gpuidspecifies which GPU to use, starting with number 0