Setup of an image classifier

This tutorial sets a classification service that will distinguish among 1000 different image tags, from ‘ambulance’ to ‘paddlock’, and more. It shows how to run a DeepDetect server with an image classification service based on a deep neural network pre-trained on a subset of Imagenet (ILSVRC12).

The machine learning service allows for an application to send images and to receive a set of tags describing this image in return. The tags are encoded in JSON.

The following presupposes that DeepDetect has been built & installed.

Getting the pre-trained model

Let us use a pre-trained model with the Googlenet architecture. However, this tutorial can be reproduced by using any of the other image classification models we provide.

First create a directory to host the model:

mkdir models

Assuming you are using a Docker DeepDetect, start the container:

docker run -d -p 8080:8080 -v /path/to/models:/opt/models/ jolibrain/deepdetect_cpu

And loading the pre-trained model is as simple as:

curl -X PUT 'http://localhost:8080/services/imageserv' -d

'{

"description": "image classification service",

"mllib": "caffe",

"model": {

"init": "https://deepdetect.com/models/init/desktop/images/classification/ilsvrc_googlenet.tar.gz",

"repository": "/opt/models/ilsvrc_googlenet",

"create_repository": true

},

"parameters": {

"input": {

"connector": "image"

}

},

"type": "supervised"

}

'

The call above automatically downloads and installs the GoogleNet pre-trained model. Or equivalently using the Python client:

from dd_client import DD

dd = DD(‘localhost’)

dd.set_return_format(dd.RETURN_PYTHON)

description = ‘image classification service’

mllib = ‘caffe’

model = {‘repository’:‘/opt/models/ilsvrc_googlenet’,

‘init’:‘https://deepdetect.com/models/init/desktop/images/classification/ilsvrc_googlenet.tar.gz'}

parameters_input = {‘connector’:‘image’}

parameters_mllib = {}

parameters_output = {}

dd.put_service(‘imageserv’,model,description,mllib,

parameters_input,parameters_mllib,parameters_output)

{

“status”:{

“code”:201,

“msg”:“Created”

}

}

Testing image classification

We can now pass any image filepath or URL to our new classifier service and it will produce tags along with probabilities. Here is a first example:

curl -X POST "http://localhost:8080/predict" -d '{

"service":"imageserv",

"parameters":{

"input":{

"width":224,

"height":224

},

"output":{

"best":3

}

},

"data":["ambulance.jpg"]

}'

{

"status":{

"code":200,

"msg":"OK"

},

"head":{

"method":"/predict",

"time":1398.0,

"service":"imageserv"

},

"body":{

"predictions":{

"uri":"../../main/ambulance.jpg",

"loss":0.0,

"classes":[

{"prob":0.992520809173584,"cat":"n02701002 ambulance"},

{"prob":0.007297487463802099,"cat":"n03977966 police van, police wagon, paddy wagon, patrol wagon, wagon, black Maria"},

{"prob":0.00014072071644477546,"cat":"n04336792 stretcher"}

]

}

}

}

The call asks for the three top tags for the ambulance.jpg image. The top tag with probability 0.99 is ‘ambulance’. This image is very possibly part of the training set, so this allows to verify that all is well.

We can pass more images at once:

curl -X POST "http://localhost:8080/predict" -d '{

"service":"imageserv",

"parameters":{

"input":{

"width":224,

"height":224

},

"output":{

"best":3

}

},

"data":["https://www.deepdetect.com/img/examples/cat.jpg",

"https://www.deepdetect.com/img/examples/alley-italy.jpg",

"https://www.deepdetect.com/img/examples/thai-market.jpg"]

}'

|

|

|

|---|

{

"status":{

"code":200,

"msg":"OK"

},

"head":{

"method":"/predict",

"time":3516.0,

"service":"imageserv"

},

"body":{

"predictions":[

{

"uri":"cat.jpg",

"loss":0.0,

"classes":[

{"prob":0.5992535948753357,"cat":"n02124075 Egyptian cat"},

{"prob":0.30885550379753115,"cat":"n02123045 tabby, tabby cat"},

{"prob":0.06913747638463974,"cat":"n02123159 tiger cat"}

]

},

{

"uri":"alley-italy.jpg",

"loss":0.0,

"classes":[

{"prob":0.41952434182167055,"cat":"n03899768 patio, terrace"},

{"prob":0.1190955638885498,"cat":"n03781244 monastery"},

{"prob":0.08107643574476242,"cat":"n03776460 mobile home, manufactured home"}

]

},

{

"uri":"thai-market.jpg",

"loss":0.0,

"classes":[

{"prob":0.3546510338783264,"cat":"n07760859 custard apple"},

{"prob":0.24165917932987214,"cat":"n03089624 confectionery, confectionary, candy store"},

{"prob":0.07271246612071991,"cat":"n03461385 grocery store, grocery, food market, market"}

]

}

]

}

}

Decoding the results:

- cat.jpg predicted as an ‘egyptian cat’

- alley-italy.jpg predicted as a ‘patio, terrace’

- thai-market.jpg predicted as a ‘custard apple’, ‘confectionery’ then ‘grocery store’, in that order.

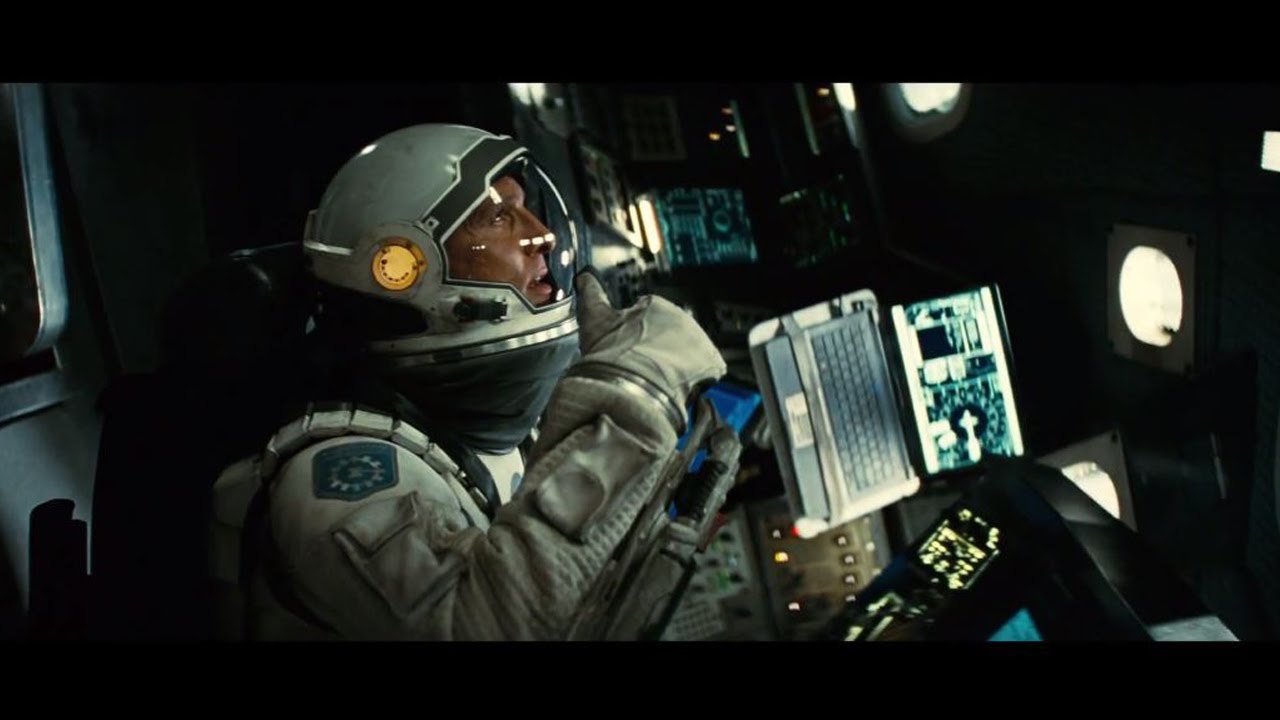

Finally, try it out with any image by providing one or more URLs of images in the data field:

curl -X POST "http://localhost:8080/predict" -d '{

"service":"imageserv",

"parameters":{

"input":{

"width":224,

"height":224

},

"output":{

"best":3

}

},

"data":["https://www.deepdetect.com/img/examples/interstellar.jpg"]

}'

{

"status":{

"code":200,

"msg":"OK"

},

"head":{

"method":"/predict",

"time":1591.0,

"service":"imageserv"

},

"body":{

"predictions":{

"uri":"http://i.ytimg.com/vi/0vxOhd4qlnA/maxresdefault.jpg",

"loss":0.0,

"classes":[

{"prob":0.24278657138347627,"cat":"n03868863 oxygen mask"},

{"prob":0.20703653991222382,"cat":"n03127747 crash helmet"},

{"prob":0.07931024581193924,"cat":"n03379051 football helmet"}

]

}

}

}

Note that the service can run indifferently on CPU or GPU.

Next steps

This is it, you can now plug this 1000 categories image classifier into the application of your choice.

The model above has good accuracy, but may be insufficient for your own purposes. In this case, you can also train your own model on your own set of images, for more targeted purposes, such as content moderation, image indexing in search engines, news categorization, etc…